AI Workforce Strategy & Human-Centred Adoption

AI is reshaping how work gets done across infrastructure, energy, defence, rail and technology. Yet most organisations still treat it as a technology programme. In practice, AI adoption succeeds or fails on how people experience, trust and use it, and on how well organisations manage the legal and regulatory risks that sit behind those behaviours.

Turning AI into a workforce advantage, not a workforce risk

As organisations accelerate AI adoption, most are still asking what technology to buy; far fewer are asking how their people will respond to it. Morson Edge works in a space most organisations now urgently need filled, but few providers genuinely occupy. Drawing on our behavioural research base, we help you measure and interpret the human dynamics of AI adoption: how people feel about AI in their roles, how they are likely to approach or resist integration, and the level of readiness and trust that will make or break rollout.

These factors are not “soft”. Workforce sentiment and behavioural readiness directly shape AI ROI, capital investment risk, and even your standing as an employer of choice. Understanding the human experience of AI is not a nice-to-have; it is a critical input for sustainable transformation and board-level assurance.

Unlike generic AI maturity narratives, our approach is grounded in organisational psychology and operational risk, and is explicitly designed for high-stakes environments where safety, regulatory scrutiny and expert talent matter.

The reality

AI adoption is now a workforce issue, not just a technology one. Across high-stakes sectors, the same patterns are emerging:

- AI is being introduced faster than people can adapt

- Trust in AI outputs is inconsistent or misunderstood

- Skills are shifting, but pathways are unclear

- Ethical and accountability risks are rising

- Early signs of stress, resistance, or over-reliance are appearing

- AI-induced anxiety is undermining innovation and the ability to learn new skills

Left unmanaged, this leads to reduced productivity and innovation, increased risk, workforce disengagement, poor return on AI investment and, in some cases, the risk of formal litigation.

Our approach

We sit at the intersection of workforce strategy, behavioural science, and responsible AI.

We help organisations adopt AI in a way that strengthens performance, protects wellbeing, and withstands regulatory scrutiny by placing human behaviour at the centre.

Our approach combines:

- Workforce insight: how different groups experience and respond to AI

- Ethics and governance: embedding responsibility into real decisions, including oversight of consequential decisions

- Behavioural science: addressing trust, anxiety, and over-reliance

This creates a practical path from diagnosis through to sustainable, real-world adoption.

Start with foundational insight

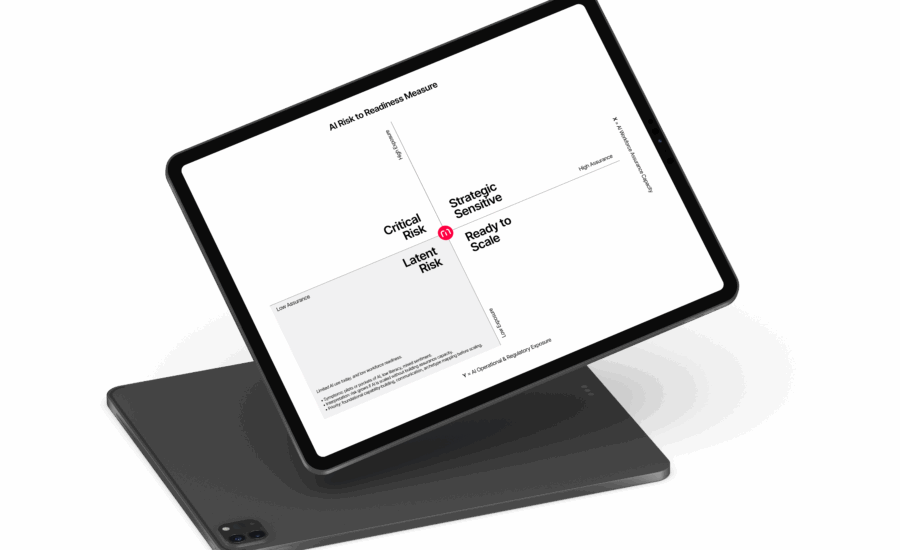

The Morson AI Risk-to-Readiness Measure

The ‘Morson AI Workforce Risk-to-Readiness Measure’ is the foundation of our approach. It provides a structured, evidence-based diagnostic of how ready your organisation is to adopt AI and where risk is building. We measure two critical dimensions:

- AI Workforce Assurance Capacity: your ability to implement AI safely, sustainably, and with workforce support

- AI Operational & Regulatory Exposure: the level of workforce, cultural, and governance risk introduced by AI

Unlike traditional AI readiness tools, we focus on the human system around the technology. This includes:

- Trust in AI and decision-making confidence

- Psychological safety to challenge AI outputs

- Technostress and cognitive overload

- Identity disruption and role impact

- Governance clarity and accountability

- Regulatory and compliance exposure

What you get

- A clear position on the AI Risk-to-Readiness spectrum

- Workforce archetypes and behavioural insights

- Identification of high-risk roles, functions, and scenarios

- A prioritised action plan to improve readiness and reduce risk

Example: An organisation may have strong AI capability but low workforce trust and high role disruption. Without intervention, that combination creates a high risk of failed adoption.

From diagnosis to delivery

We support organisations across three connected service areas:

AI Archetype Mapping

Morson’s proprietary AI Archetype Mapping is a behavioural diagnostic that segments your workforce into four AI mindsets – Zoomers, Bloomers, Gloomers and Doomers – showing how different groups think, feel and behave around AI at work.

We help you see where enthusiasm, caution and technostress cluster, who will lead or stress‑test adoption, and who is most at risk of resistance or shadow AI use. Archetypes are mapped against variables such as age, discipline and function so you stop treating the workforce as a single audience.

You get a quantified AI readiness map and risk profile by archetype and segment, plus targeted recommendations for communication, training, governance and leadership. Leaders gain a practical narrative for boards and EXCO on where to move fast and where to invest in trust, skills and guardrails first.

Outcome: you move from guesswork to evidence‑based action on readiness, risk and ROI – turning hidden human data into a strategic asset and enabling disciplined, enterprise‑level AI value.

AI Policy Writing

Most AI policies are written for systems, not people. They’re abstract, tech‑heavy and disconnected from how decisions are really made. Our human‑centric AI policy writing turns high‑level principles into clear, role‑specific guidance your workforce can understand, trust and apply under pressure.

We help you translate AI and data principles into plain‑language standards, define where AI is appropriate or prohibited, clarify accountability when AI is involved in decisions, and tailor expectations by role, seniority and risk profile. Policies are aligned with sector regulation, employment law and your wider governance framework.

You get usable AI use policies and standards, role‑based “AI playbooks” that show what good looks like, and practical guidance for leaders on reinforcing, monitoring and escalating issues across HR, IT, risk and operations.

Outcome: AI policies that are understandable, adoptable and enforceable, reducing ambiguity, strengthening accountability and providing a defensible line of sight from board‑level principles to day‑to‑day practice.

Behavioural Risk Profiling

As AI tools become more accessible, “shadow AI” – unapproved, ungoverned use of AI to get work done – is inevitable. Traditional controls rarely see it until something goes wrong. Our behavioural risk profiling gives you early visibility of where and why it is most likely to emerge.

We profile workforce segments by attitude to AI (enthusiastic, cautious, resistant, over‑reliant), identify behavioural drivers such as time pressure or policy irrelevance, and map where unapproved tools are most likely to appear across roles, functions and locations.

You get a behavioural risk map highlighting shadow AI propensity, segment‑level insight into motivations and pressure points, and targeted recommendations for controls, communication, training and leadership focus.

Outcome: proactive control of AI‑related behavioural risk, reducing unapproved AI use, safety incidents, data leakage and compliance breaches, while preserving the curiosity and experimentation you actually want.

Business impact

Our AI toolkit is designed for senior leaders responsible for performance, risk and transformation: CEOs, COOs, Chief People Officers, Chief Risk Officers, Chief Technology and AI Leaders, and Strategy and Transformation Directors. It also supports boards, risk committees and compliance functions overseeing AI adoption.

AI only delivers value when it is trusted, adopted and used safely in real workflows. When workforce risk is ignored, organisations don’t just miss ROI; they increase the likelihood of grievances, tribunal claims and regulatory scrutiny tied to AI-driven decisions.

By surfacing psychosocial risk, bias, governance gaps and unsafe patterns of AI use, we help you intervene before they crystallise into discrimination claims, unfair dismissal cases, data protection breaches, or non-compliance with emerging AI regulation.

Unmanaged stress, anxiety and identity disruption around AI can drive mental health issues, formal complaints and dismissal claims. By addressing these factors early, you protect both workforce wellbeing and your organisation’s legal position.

Leaders gain an evidence base that links AI adoption to workforce impact, risk and control effectiveness. This supports clearer lines of accountability, more robust documentation, and stronger assurance to boards, auditors and regulators that AI is being deployed responsibly.

Why Morson Edge

Workforce first, not tech first

We bring together human-centred, research-led and risk-aligned expertise: organisational psychology, AI adoption science, behavioural insight and sector-specific workforce knowledge.

Making legal risk visible

We make litigation and regulatory exposure visible, linking workforce dynamics to discrimination risk, unfair dismissal, psychosocial harm, data protection failures and emerging AI regulation.

Archetypes, not averages

We use AI workforce archetype mapping to show how different groups use, trust and sometimes misuse AI, pinpointing where operational, ethical and legal risk is concentrated and where it is safest to scale.

From diagnosis to action

We move from diagnosis into guardrails, role and workforce redesign, skills realignment, and culture and change support that stand up under legal and regulatory scrutiny.

Built for high-stakes environments

We are built for high-stakes sectors, with deep experience across nuclear, defence, rail, infrastructure, energy, engineering and advanced technology, where decisions carry real-world consequences.

Strategy connected to delivery

We connect strategy to delivery, leveraging Morson’s wider ecosystem in recruitment, contingent labour, project delivery and training so you can redeploy skills, hire differently and build capability at scale.

Think sharper.

Insights, ideas, and intelligence. Where smart thinking meets real-world impact.